The Unseen Hands: How Faith and Ethics Shape My Approach to AI and Security

The unseen forces behind tech decisions

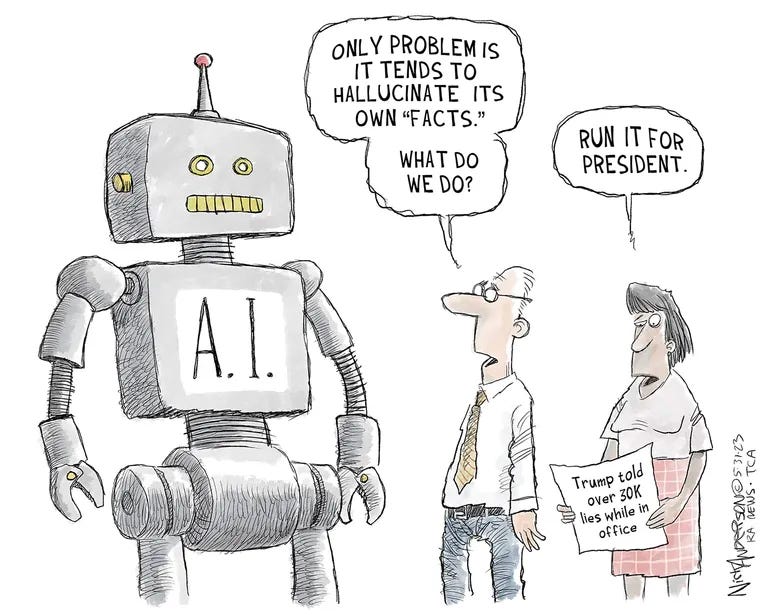

Technology presents itself as neutral—a data-driven, objective force. We, the people, think differently. Take AI, for instance. Conversations around it reveal significant pushback. I’ve heard it quite a bit from my company’s marketing department.

Behind every AI model, security system, and digital privacy policy are human decisions shaped by values, biases, and unseen ethical frameworks.

For me, faith and ethics provide the foundation for engaging with technology, including AI and security, as I navigate them daily.

Faith and ethics aren’t abstract concepts. They’re guiding principles influencing decisions from development to production.

Faith, ethics, and technology: a personal lens

Whatever I do, I approach it with an ethical filter, a byproduct of my religious upbringing and my determination to be decent.

As a member of the Church of Jesus Christ of Latter-day Saints, I hold that integrity, transparency, and respect for human dignity should underpin my work. So, I apply my ethical filter, whether writing technical documentation or teaming up with ChatGPT to complete a ticket.

In an era when AI is reshaping jobs and industries, and we trade security and privacy for convenience, we can’t disregard ethical considerations.

January is when I have my annual review with my boss. Our company uses a form that we both complete. This year, I prompted AI to help me with my input. Then, I had a call with my boss to review it.

“Did you use AI for this?” he asked.

“Yep,” I responded without hesitation. “Of course I did.”

“Yes, me, too,” he replied.

When you hear that, what’s your initial reaction? Do you think I should have felt bad for admitting to my boss that I used AI? Was I cheating? If that was your initial thought, why? Shouldn’t I leverage it to quickly complete my evaluation and return to my crucial technical writing work? And how do you feel about my boss doing the same?

AI and security: an ethical playground

Artificial intelligence and security intersect in ways that bring up ethical questions.

Was I cheating by using AI for my annual evaluation? That’s a basic question, and I’ve determined that the answer is no.

The ethical question is more:

Was it okay to use AI for my evaluation, given that I shared personal work details and company goals with it?

That’s the ethical question for my basic example. What about the bigger questions?

AI-driven decision-making influences hiring, law enforcement, healthcare, and financial systems. These systems have been shown to carry hidden biases that disproportionately affect marginalized communities.

In security, the question isn’t just how to protect data but who controls it and who gets to access it. VPNs, encryption, and privacy tools exist partly because of an underlying belief that individuals should have control over their digital presence, not corporations or governments with opaque motives.

In the last month, the actions of Trump, Musk, and the Department of Government Efficiency (DOGE) have raised serious concerns about transparency and ethics in technology and governance. Critics argue that, despite its claims of promoting efficiency, DOGE operates with excessive secrecy that undermines public trust and accountability.

While serving as a special government employee, Musk maintains substantial private-sector interests, including ownership of Twitter (or X, right?). This dual role creates conflicts of interest. Musk uses his platform to advance governmental policies and target dissenters, blurring the lines between public service and private gain. Actually, there are no lines now.

DOGE’s aggressive cost-cutting measures (where are the numbers showing they’ve actually saved anything?) have rightly been criticized for lacking legal authority and undermining our democratic institutions. State attorneys general have described these actions as an attack on U.S. democracy. They’ve highlighted the absence of ethical considerations in the supposed pursuit of efficiency.

I sent an email to my state’s attorney general, Derek Brown. No response.

These current events underscore the necessity for ethical stewardship in technology and governance. Without transparency and accountability, the integration of private interests into public roles erodes public trust and compromises the integrity of our democratic institutions.

The dangers of “neutral” technology

A common misconception in tech is that AI and security tools are neutral.

They’re not.

Every algorithm reflects the priorities of its creators, whether intentional or not.

Bias in AI training data leads to discrimination in hiring tools. Governments use surveillance under the guise of security, but the cost is a loss of individual freedoms.

Accepting the myth of neutrality means ignoring the ethical responsibilities of those who develop and deploy these technologies.

A call for ethical stewardship in tech

Being an “ethical technologist” means making deliberate choices about the technology we create and advocate for. It means pushing for transparency in AI decision-making, prioritizing security measures that protect individual freedoms rather than corporate interests, and questioning whether convenience is worth sacrificing privacy.

I’m well aware of the areas in my life where I’m willing to pay with my privacy. I have an Android phone. My Google apps know far too much about me, but the convenience is worth the cost. For me. I even take Google surveys. They give me a wee bit of change for each one, which I use for purchasing audiobooks. Trade-off.

Still, companies need to be held accountable for how they handle data. AI developers must build systems with safeguards that prevent harm rather than enable it. Will they do it on their own?

Doubtful.

Would I support government regulation in this area? Definitely.

The hand that guides the future

Ethical decisions in technology are rarely dramatic or noticeable. They don’t make for viral TikTok videos. Instead, they’re small choices made every day:

Choosing to document a security flaw rather than ignoring it.

Designing an AI system that prioritizes fairness.

Refusing to contribute to unethical tech practices.

My faith and ethics remind me that technology isn’t about what we can do but what we should do. In a world increasingly driven by automation, security concerns, and AI-powered decision-making, human responsibility is the unseen hand guiding it all.